Four weeks in, the content engine is real.

The work is shipping. Some of it is landing. Some of it is not.

I am still unemployed, which means the stakes in this experiment are real.

TechThatMattRs is a public content experiment I’m running while job searching in technical security product marketing (PMM). The goal is to build a visible body of work that shows how I think, not just what I’ve done.

What follows is less a tidy retrospective and more a stitched-together log from the last two weeks: what shipped, what slipped, what the numbers actually said, and what the workflow revealed.

The whole thing has started to feel less like a good idea and more like a real job under real pressure. Some parts of the toolchain held up. Some broke. Some turned out to be more useful than elegant. The resulting numbers told the truth either way.

In Week Two, the toolchain experiment was already part of the story. This cycle was where it stopped being a setup detail and started affecting the work directly. Four weeks in, that part is clearer, not blurrier. The tools are involved. The handoffs matter. The workflow matters. The person carrying the workflow matters too.

That made this cycle more useful than it was easy or fun.

What shipped, what slipped

The output layer is not hypothetical anymore. A real run of work went out over this stretch: “Defending Against Modern Cyber Threats: A Day in the Life of Security Operations”; “Week Two of Building a Content Engine in Public”; “Approved Tool, Expanding Agent: The Ownership Model That Works”; “When Your MDM Becomes the Weapon”; “Agent Inventory and the Agent Register: The Control You Need Before Agent Sprawl Becomes Identity Debt”; and, finally, after running late, “Agents Are Identities: The New Control Plane for Enterprise AI.”

That last one is the one worth lingering on.

The most important miss this cycle was not a bad idea or a dead draft. It was a strong piece that slipped past its intended publish window. The thesis was there. The structure was there. The framework was there. The sources were there. The image was chosen. It still did not ship on time.

It did get out. That matters.

Shipping late is better than not shipping. It is still not the same as shipping on time.

A couple of quieter misses matter too. The back-catalog spotlight task for this cycle did not go out. That sounds minor until you remember what those posts are supposed to do. They extend reach without requiring a net-new draft. They give the system leverage. Missing them means preserving the workload while losing some of the surface area.

That is not catastrophic. It is still useful to notice.

The work also did not slip in a vacuum. There is a version of this story where the only variables are process, tooling, and output. That is not this version. This cycle ran alongside helping family through a complicated move, parenting moments that required more negotiation than some enterprise rollouts, and the general drag that comes with being unemployed longer than planned.

None of that is unique. Most people reading this are carrying something.

The outcome is still the same. The work slipped.

Pretending those conditions do not matter would make the post less honest, not more.

What the numbers actually say

The numbers this cycle are not impressive. That is fine. The useful part is that they are clear enough to say something real.

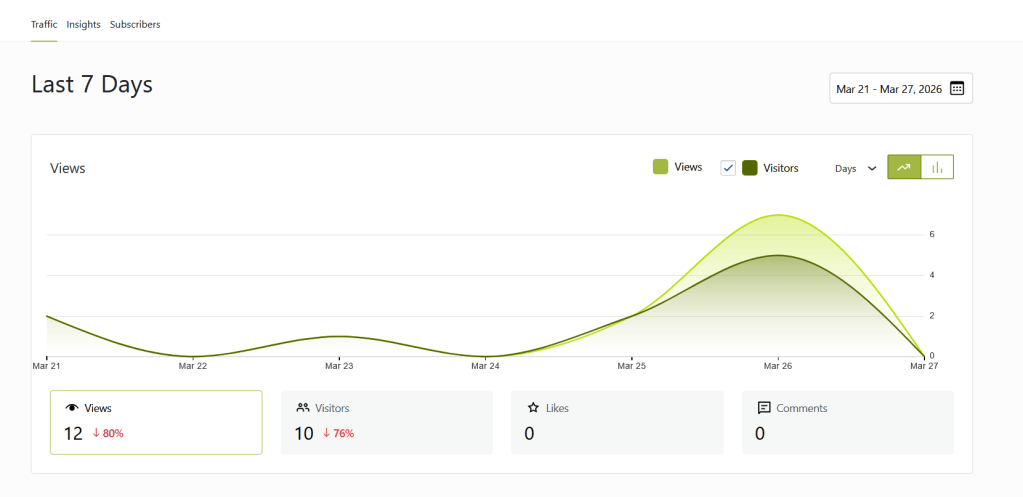

Over the last seven days, LinkedIn showed 558 impressions, 299 members reached, 8 total engagements, and 0 sends. WordPress showed 12 views and 10 visitors for the week, down 80% and 76% respectively from the prior period, with 0 likes and 0 comments.

The chart tells the story: a spike on the days the technical posts shipped, then a drop to zero. Those are not vanity numbers. Those are operating numbers.

The earlier pattern is still visible in the contrast cases. The Week Two post did 197 impressions, 36 reactions, and 3 comments on LinkedIn. The Agent Register post did 94 impressions and 0 engagements. The Stryker / MDM post did 50 impressions and 4 reactions on LinkedIn, then effectively flatlined on-site. On WordPress, the Week Two post still leads with 35 views, and the Field Notes Index is still doing useful work as the front door at 19 views.

That is enough data to stop pretending this is just an attention problem.
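For anyone who wants the arithmetic behind that, here is a minimal sketch in Python using only the seven-day figures quoted above. The variable names and the `rate` helper are mine, invented for illustration. The "transfer ceiling" is an assumption rather than a measured value: it treats every WordPress view as if it came from LinkedIn, which the analytics do not confirm, so it can only be read as an upper bound.

```python
# Seven-day figures as quoted in this post.
linkedin = {"impressions": 558, "members_reached": 299, "engagements": 8, "sends": 0}
wordpress = {"views": 12, "visitors": 10}


def rate(part: int, whole: int) -> float:
    """Return part / whole as a percentage, or 0.0 when whole is zero."""
    return 100.0 * part / whole if whole else 0.0


# Share of LinkedIn impressions that produced any engagement.
engagement_rate = rate(linkedin["engagements"], linkedin["impressions"])

# Share of LinkedIn impressions that produced a send (the trust signal).
send_rate = rate(linkedin["sends"], linkedin["impressions"])

# ASSUMPTION: every site view is attributed to LinkedIn. That is not
# verified, so this is an upper bound on transfer, not a measurement.
transfer_ceiling = rate(wordpress["views"], linkedin["impressions"])

print(f"engagement rate:  {engagement_rate:.2f}%")
print(f"send rate:        {send_rate:.2f}%")
print(f"transfer ceiling: {transfer_ceiling:.2f}%")
```

On these inputs, engagement works out to roughly 1.4% of impressions, sends to zero, and transfer to at most about 2.2%. The exact values matter less than the shape: the drop-off sits between the layers, not at the top.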

Where the system broke

The system did not lose reach. It lost transfer.

Attention happened. Movement did not.

Right now, the machine has a few clear layers. LinkedIn is the conversation layer. WordPress is the depth layer. Asana is the planning and accountability layer. The system only works if the handoff between those layers is clean.

This cycle, it was not.

A few weeks ago, the strongest signal in the system was sends. Not reactions. Not vanity reach. Sends. If somebody sends a post to another person, another room, or another chat, that means something. That is worth carrying. That is trust.

This cycle, that signal went quiet.

Impressions are reach. Reactions are acknowledgment. Sends are trust.

This week, trust did not show up in the data the way it did before.

That does not automatically mean the work is weak. Organic reach on LinkedIn has been declining broadly, with some analyses putting views down 50% year-over-year, so part of what the numbers reflect is a platform headwind, not just execution variance. The quiet send count does mean the work is not moving between layers. And if the content is not moving, the system is not really working yet, no matter how active it looks at the top. LinkedIn analytics will show part of that picture. They will not show all of it.

The content got stronger. The posts got weaker.

This one took a minute to admit because it sounds backwards until you see it happen.

The recent posts were more complete. More structured. More technically resolved. They explained the problem clearly. They made the argument cleanly. They often did exactly what I wanted the blog itself to do.

That sounds like progress. It is not.

Posts that resolve the idea too fully on LinkedIn remove the reason to click. They also remove the reason to share.

One of the notes in Asana from this cycle says it plainly about an earlier post: the LinkedIn version was too complete. The reader got the answer on-platform and did not click through.

That lines up with what the numbers did this week.

Being right is not enough. Being clear is not enough. The post has to create a reason to move.

That is the thing I am relearning in public right now.

The content itself is stronger. The social packaging got weaker.

That is not a fun lesson. It is still a useful one.

The Stryker post gave me a process rule

The Stryker blog itself was good. It was topical content built on about a week of research and development, and it landed while other real analysts were publishing their takes. The underlying piece had the right ingredients. The first LinkedIn post did not. Six hours in, it was pretty obvious it had flopped.

I approved that version quite late, when I was already tired and not paying close enough attention to what it was saying or how it was saying it. It was not terrible. It was just okay. And okay was not good enough for that moment.

That post should have hit, but the LinkedIn algorithm knew it was meh, and the numbers showed it.

So I deleted that one and wrote a fresh social post the same day, this time using GPT instead of Claude. Within an hour, the second version had already doubled the impressions of the first and driven three views to the actual article.

That is not a clean laboratory comparison. It is still a useful signal.

The takeaway for me was that I can absolutely bottleneck a good piece of work by approving mediocre promotion copy when I am too tired to be sharp about tone, framing, and force.

So this is a rule now: do not approve social copy at 2 a.m. and expect the platform to carry a post just because the underlying blog is strong.

That is process control in action.

The toolchain experiment

This cycle was less about choosing tools and more about trying to make the workflow better.

I was using automation and AI to reduce friction, speed up the parts that should move faster, and make the whole process a little less manual: drafting faster, reviewing better, keeping the task flow tighter, and reducing some of the drag that comes with doing all of this solo. Once the work got real, the useful question became which parts of the system were actually improving and which were just adding noise.

GPT ended up doing most of the heavy lifting on drafting this cycle. Claude was more useful as a second set of eyes than as a primary writing engine. That included statement vetting, some market-level observations, and Asana support.

That mismatch was useful in its own way. It made the limits obvious faster. It also pushed me to get much more intentional about model memory and context management. The better I got at shaping Matt-context cleanly, the more the output started sounding like me instead of sounding like a tool trying to sound like me.

Asana remained the backbone, and the arrival of AI Teammates in tools like Asana is part of why this workflow question is getting more real, not less. Google Docs earned its place as the neutral handoff layer between tools that do not need to know what the other one did as long as the work stays visible.

I have been doing this on a near-zero budget. The only things I pay for are GPT Plus and an annual ACDSee 365 Home Plan. ACDSee has been part of my image toolchain since the mid-90s, and LUXEA Pro Video Editor is the video side of that stack. For AI images, I use a free image-generation workflow built with Perchance rather than a paid image tool. Everything else is the free tier of enterprise apps: WordPress, Asana, Slack, and so on. That constraint shaped the workflow as much as the tools did.

That part worked.

The edges were messier.

Slack Canvas was not worth paying for, but Claude kept trying to use it anyway. Some things worked cleanly in one place and fell apart in another. The closer the workflow got to “this should just work, no issue,” the more likely reality was to show up with a connector limit, a plan restriction, or an OAuth tantrum.

None of that made the experiment less useful. It made the tradeoffs easier to see. Some parts of the system were helping. Some were adding drag. Some only looked useful until the work got real.

I was not trying to optimize around one perfect tool. I was trying to build a workflow that could keep moving even when one part of the stack got weird, expensive, or limited.

The finishing problem

Drafting is working.

Ideation is working.

Structure is working.

Finishing is uneven.

The piece that slipped this cycle proves it. Agents Are Identities was late not because the idea was weak, but because the last stretch is a different kind of work. Getting a piece from zero to eighty percent is one job. Getting it from a strong draft, with framework, sources, image, and direction in place, to a clean final that is laid out, published, linked, and promoted is another.

A lot of content systems die in that last stretch.

That does not make the miss less real. It makes the miss more informative.

Drafting and shipping are not the same skill.

This cycle made that painfully clear.

The smaller misses tell the same story in quieter ways. Back-catalog spotlight tasks are not glamorous, but they matter. They extend distribution without requiring new concept work. When they slip, the system loses leverage.

Some misses are glamorous. Some are quiet. The quiet ones count too.

The backlog is not empty. The pipeline is not blocked. The issue is what gets across the line.

That is the work now.

Proof of work under real conditions

One of the core hypotheses behind this whole project was that a visible body of work would tell a stronger story than another polished paragraph on a résumé.

Not because résumés are useless. Because they are compressed. They tell people what you did. They do not always show them how you think.

This cycle was the first time the hypothesis started to feel less theoretical. I started to get some interviews. None of them have panned out, but that is traction, and traction is better than last month, when I had none.

I am not pretending I have perfect attribution on that. I do not. I am also not pretending that every recruiter conversation came from a blog post. That would be silly.

But a pattern is starting to show. Since mid-December, my Washington job-search log has grown to 672 entries. This project has not made the market easier, but I am getting better visibility. Relevant content is getting seen by the right kinds of people. The work is functioning as more than output. It is functioning as visible reasoning.

That was the point.

The signal is still early. That is fine. Early signal is still a signal.

What changed this cycle

A few things moved from observation to rule.

Sends remain the strongest public trust signal. If that number goes quiet, I pay attention.

Complete LinkedIn posts kill click-through. The social layer has to create the question, not resolve it.

Reactive content gets a sleep cycle.

Google Docs is not glamorous, but it has earned its place as the neutral handoff layer.

The workflow is now officially multi-tool by design, not by accident.

And maybe the most important one: the system has to work under imperfect conditions or it is not a system. It is a mood.

That applies to the tools. It also applies to the person carrying the week.

What’s next

The next obvious question is whether I can fix the transfer problem without flattening the writing into bait.

That means sharper hooks, less early resolution on LinkedIn, cleaner handoffs into the blog, and a little less urge to solve the whole problem inside the social post itself.

I am also watching the finishing layer. I do not need more ideas right now. I need stronger last-mile discipline.

The next format shift in the plan is video, and that deserves an honest line item too. Stage and workshop delivery are familiar territory for me. Camera recording is not. A room gives you a signal. A camera gives you a lens and your own thoughts getting louder. That does not mean video stays optional. It does mean I need to treat it like a real capability shift, not a checkbox in the content calendar.

I am also not looking to solve this by collecting more attention channels. The current loop is enough to diagnose. LinkedIn is already producing reach. WordPress is already the canonical depth layer. Adding more surfaces before the handoff works would just create more places for the same transfer problem to show up.

Substack is interesting for exactly that reason, but later, not now. Its publishing and following model makes sense as a distribution and retention layer, not a magic fix. Another surface before the current transfer problem is fixed would be expansion in the wrong order.

That is not expansion. That is dilution.

So for now, the job is not to be everywhere. The job is to make one channel work end to end.

What to do with this

If you are building your own content system, this is the part I would steal: track the real numbers, name the transfer problem early, and do not expand to new channels until one loop actually works end to end.

Do not confuse activity with movement. Do not confuse more surfaces with more traction. And do not assume a good draft is close enough to done.

The system is still rough. Some parts of it are working. Some are not. The numbers are still small. The misses are real. The conditions are real too.

That is fine.

The useful part is clearer now.

The machine can produce output. That is no longer in question.

The machine is learning how to finish. That part is uneven.

The machine is not yet reliably producing movement.

The writing can be strong and still fail to transfer. The workflow can be smart and still get hung up in the last mile. The calendar can be full and still leave the most important piece running late.

That is what this cycle clarified.

The workflow has to survive tool limits. The process has to survive real life. The person running the thing still has to survive the week.

That is the experiment.

This cycle is the first one that really tested it.
