Shadow agents are the new shadow IT.

Not in a cute way.

Not in a buzzword way.

In the old, familiar, “we’ve seen this episode before, and it ends with cleanup work nobody budgeted for” way.

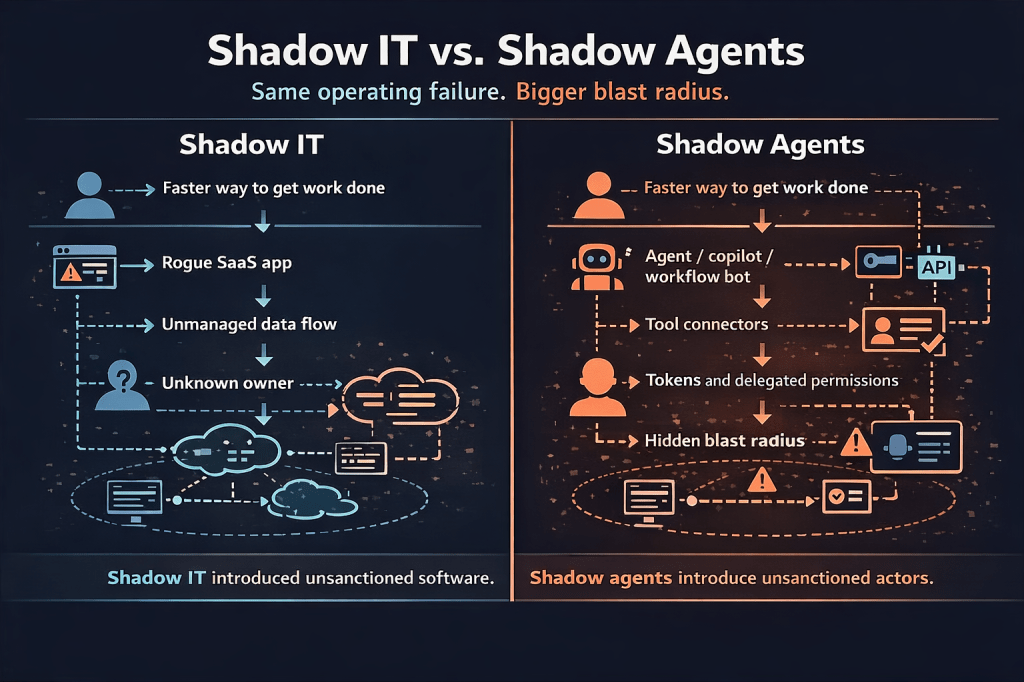

A few years ago, shadow IT meant unsanctioned SaaS, rogue cloud storage, a team buying a tool without review, or a workflow that bypassed the normal control path because it was faster and easier. The risk was never just the app. It was the unmanaged identity, the invisible data path, the missing owner, and the fact that security only found out after adoption.

Now replace “app” with “agent.”

That’s the shift.

And here’s the uncomfortable part:

Agents are worse.

A shadow SaaS app could expose data.

A shadow agent can expose data, invoke tools, make decisions, trigger workflows, and act with delegated authority that no one modeled clearly enough.

That is where current external guidance is starting to converge. Cloudflare’s shadow AI explainer frames shadow AI as unauthorized and untracked AI use that expands the attack surface, while Okta’s shadow AI guidance goes a step further and explicitly calls out autonomous agents and non-human identities as part of that sprawl.

If you’ve read my earlier posts like “Beyond Human: Securing Agentic AI and Non-Human Identities in a Breach-Driven World,” “What Are Non-Human Identities?”, “Non-Human Identity Management,” or the “NHI Ownership Security Checklist,” you already know where I land on this:

If it can read data, call tools, or take action, it is not “just automation.”

It is an identity problem with better marketing.

Shadow IT was never really about apps

The useful mental model here is simple:

Shadow IT was never really about apps. It was about unsanctioned capability.

That’s the bridge to shadow agents.

The old pattern looked like this:

- Somebody found a faster way to get work done

- Governance came later, if at all

- Identity and access got treated like implementation details

- The organization discovered the risk after adoption, not before it

Now the same pattern is showing up with agents:

- Somebody launches a support agent, research assistant, RevOps copilot, or workflow bot

- It gets connected to Slack, docs, ticketing, CRM, or code repos

- It accumulates scopes, tokens, and delegated access

- Everyone talks about productivity

- Almost nobody maps the blast radius until something weird happens

That’s why the security conversation around agents has to stay grounded in boring things:

- Inventory.

- Ownership.

- Boundaries.

- Lifecycle.

You can call it AI governance if you want. Fine. But if the program can’t answer who owns the agent, what it can touch, what identities and secrets sit behind it, and how to disable it fast, then it is still just shadow IT with a new user interface.

That’s also where the standards are lining up. OWASP’s Top 10 for Agentic Applications 2026 calls out risks like tool misuse, goal hijack, and identity and privilege abuse. OWASP’s Securing Agentic Applications Guide 1.0 pushes toward practical controls for tool-using, multi-step autonomous systems. The industry is noticing the same thing: agents collapse identity, automation, and workflow risk into one messy problem.

The real failure mode

Now let’s make it real.

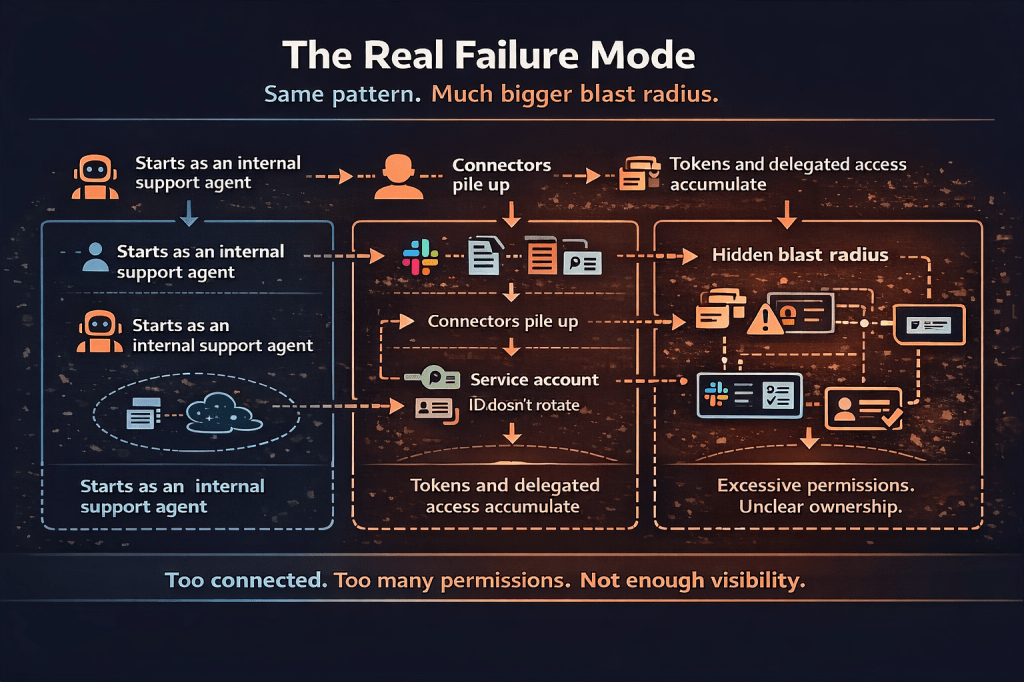

A team launches an internal support agent. Nothing wild. It can summarize tickets, read docs, pull account context, open follow-up tasks, and post into a team channel. It’s useful quickly, which is exactly why this stuff spreads.

Then the connectors pile up.

The agent gets access to a shared knowledge base. Then ticketing. Then CRM. Then a lightweight approval workflow. Then a service account nobody rotates because “we’re still piloting.” Then a copy of the prompt lives in one place, the token lives in another, and nobody has ever looked at the actual effective permissions in a single view.

That is where the problem starts.

Not when the model hallucinates.

Not when somebody says “agentic.”

When the agent becomes an actor with real permissions and no adult supervision.

I wrote about the data side of this in “Copilot, Can You Keep a Secret?” and the access side of it in “Identity Is the New Control Plane.” Same principle. Different wrapper. Over-permissioned data plus AI retrieval is dangerous. Over-permissioned tools plus AI action is worse.

This is also where the market is telling on itself in useful ways. Microsoft Entra Agent ID exists because the old identity constructs are not enough for agents. Okta’s newer agent security material is explicitly talking about treating AI agents as primary identities with lifecycle, permissions, and oversight.

You do not have to buy either product story to notice the signal. The industry is moving toward agent identities as a first-class control problem.

The framework

This is where I’d put up the simplest slide in the deck:

- Find it.

- Name it.

- Limit it.

- Review it.

That’s the framework.

Find it

You can’t govern what you can’t see. Shadow agents get discovered the same way shadow IT always did: late, sideways, and usually by accident. Start with a register. Every agent, bot, copilot, orchestration flow, or automation with delegated access goes on the list.
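
To make the register concrete, here is a minimal sketch of what one entry and the scoping rule might look like. The field names, the `AgentRecord` class, and the helper functions are all hypothetical, not taken from any product. The only rule it encodes is the one above: if it has credentials, connectors, or delegated access, it goes on the list.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentRecord:
    """One row in a hypothetical agent register (field names are assumptions)."""
    name: str
    owner: Optional[str] = None                            # a named person, if known
    credentials: list[str] = field(default_factory=list)   # service accounts, API keys, tokens
    connectors: list[str] = field(default_factory=list)    # e.g. "slack", "crm", "ticketing"
    delegated_access: bool = False                         # acts on behalf of a user or team


def in_scope(agent: AgentRecord) -> bool:
    """An agent belongs on the register if it holds credentials,
    connectors, or delegated access."""
    return bool(agent.credentials or agent.connectors or agent.delegated_access)


def build_register(candidates: list[AgentRecord]) -> list[AgentRecord]:
    """Keep only the candidates that meet the scoping rule."""
    return [a for a in candidates if in_scope(a)]
```

A spreadsheet does the same job on day one. The point of writing the rule down is that "is this thing in scope?" stops being a debate.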

Name it

Every agent gets a real human owner. Not “Platform Team.” Not “AI Innovation.” A person. If nobody owns it, it does not belong in production.

Limit it

Separate read from write. Use narrow scopes. Use short-lived tokens where the platform supports them. Put approval gates in front of sensitive actions. If the same identity can read sensitive data and take irreversible action across tools, congratulations, you built a privilege bundle.
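
A rough way to spot a privilege bundle is to check whether one identity holds both sensitive read scopes and irreversible write scopes. The sketch below assumes made-up scope strings and a simple `resource:verb` naming pattern. Real platforms name scopes differently, so treat the sets as placeholders to be filled in from your own environment.

```python
# Hypothetical scope names; substitute the scope strings your platforms actually use.
SENSITIVE_READ = {"crm:read", "hr:read", "tickets:read_pii"}
IRREVERSIBLE_WRITE = {"crm:delete", "payments:write", "identity:admin", "repo:push"}


def is_privilege_bundle(scopes: set[str]) -> bool:
    """True when one identity can both read sensitive data
    and take irreversible action."""
    return bool(scopes & SENSITIVE_READ) and bool(scopes & IRREVERSIBLE_WRITE)


def split_identities(scopes: set[str]) -> dict[str, set[str]]:
    """Suggest a split: irreversible scopes move to a separate writer
    identity, everything else stays with the reader."""
    writer = scopes & IRREVERSIBLE_WRITE
    return {"reader": scopes - writer, "writer": writer}
```

For example, `is_privilege_bundle({"crm:read", "payments:write"})` is true, and `split_identities` would put `payments:write` on its own identity where an approval gate can sit in front of it.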

Review it

Agents need a lifecycle. Access review. Rotation. Disablement. Offboarding. Exception handling. If the owner leaves, the agent should not become a digital ghost with access to half the company.
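
A periodic review can be as simple as a function that walks the register and flags what is overdue. This is a sketch under stated assumptions: the 90-day thresholds, the dictionary keys, and the `review_findings` name are all illustrative, and the list of active staff would come from whatever HR or directory source you already trust.

```python
from datetime import date, timedelta

# Assumed policy values for illustration only.
MAX_SECRET_AGE = timedelta(days=90)
REVIEW_INTERVAL = timedelta(days=90)


def review_findings(agent: dict, active_staff: set[str], today: date) -> list[str]:
    """Return lifecycle problems for one register entry.

    Expects the hypothetical keys 'owner', 'secret_rotated_on',
    and 'last_access_review'.
    """
    findings = []
    # An owner who is missing or has left makes the agent a ghost.
    if agent.get("owner") not in active_staff:
        findings.append("owner missing or departed: disable or reassign")
    if today - agent["secret_rotated_on"] > MAX_SECRET_AGE:
        findings.append("secret overdue for rotation")
    if today - agent["last_access_review"] > REVIEW_INTERVAL:
        findings.append("access review overdue")
    return findings
```

Run it on a schedule and on every offboarding event. The second trigger is the one that catches digital ghosts.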

That structure maps well to NIST’s AI Risk Management Framework and the Generative AI Profile. The point is not to admire the framework. The point is to use it to keep pilot magic from becoming production debt.

What to do in the next 90 days

Now bring it down to earth.

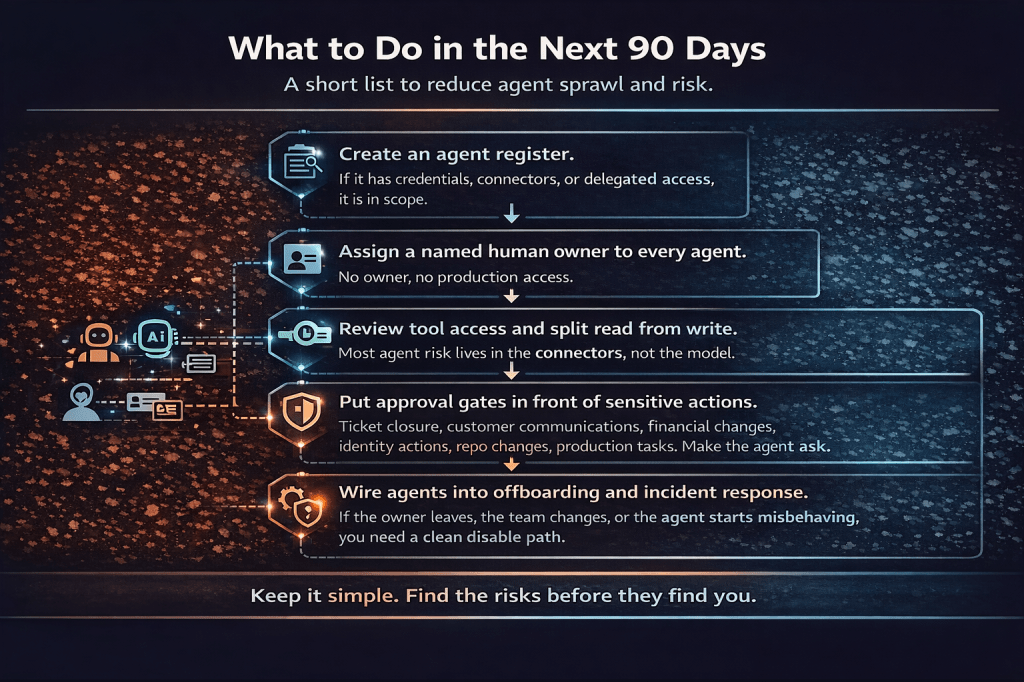

If you walk out of the room and do nothing else, do these five things in the next 90 days:

1. Create an agent register.

If it has credentials, connectors, or delegated access, it is in scope.

2. Assign a named human owner to every agent.

No owner, no production access.

3. Review tool access and split read from write.

Most agent risk lives in the connectors, not the model.

4. Put approval gates in front of sensitive actions.

Ticket closure, customer communications, financial changes, identity actions, repo changes, production tasks. Make the agent ask.

5. Wire agents into offboarding and incident response.

If the owner leaves, the team changes, or the agent starts misbehaving, you need a clean disable path.
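
Steps 4 and 5 can be sketched as a single wrapper that every agent action passes through. Everything here is hypothetical: the action labels, the in-memory `DISABLED_AGENTS` set standing in for a real kill switch, and the `approve` callback standing in for whatever human approval flow you use. The shape is what matters: check the disable list first, make sensitive actions ask, and only then execute.

```python
from typing import Callable

# Categories from the list above; the string labels themselves are made up.
SENSITIVE_ACTIONS = {
    "ticket.close", "customer.message", "finance.change",
    "identity.change", "repo.change", "production.task",
}

# Stand-in for a real disable mechanism (revoked token, disabled identity, etc.).
DISABLED_AGENTS: set[str] = set()


def run_action(agent: str, action: str,
               execute: Callable[[], str],
               approve: Callable[[str, str], bool]) -> str:
    """Run an agent action behind a kill switch and an approval gate."""
    if agent in DISABLED_AGENTS:
        return "blocked: agent disabled"
    # Sensitive actions require a human to say yes.
    if action in SENSITIVE_ACTIONS and not approve(agent, action):
        return "blocked: approval denied"
    return execute()
```

In this sketch, disabling an agent during an incident is one line, `DISABLED_AGENTS.add("support-agent")`, and it takes effect on the next call. A real disable path should be about that simple.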

That is not flashy. It is also how you stop shadow agents from becoming the next thing everybody pretends they always knew was risky.

And yes, identity still matters here in the boring old way. CISA’s phishing-resistant MFA guidance is not about agents specifically, but it matters the second an agent workflow hands control back to a human for approvals, escalations, or privileged actions. Weak human identity controls combined with autonomous workflows are not innovation. They are a faster incident path.

Final Thoughts

Shadow IT used to be the app nobody approved.

Shadow agents are the identities nobody governs.

Same movie. Faster actors. Bigger blast radius.

If an agent can read data, call tools, make decisions, or take action, it needs the same things every other powerful identity needs:

- Ownership.

- Boundaries.

- Lifecycle.

- Review.

Because if it doesn’t have those, it is not innovation.

It is shadow IT in a smarter suit.
